Apache Spark vs Hadoop – Key Takeaways

- Apache Hadoop is an open-source framework built for distributed data storage and batch processing.

- It uses HDFS to store very large datasets across multiple machines.

- It also uses MapReduce for processing and YARN for resource management.

- Hadoop is best known for handling large-scale, storage-heavy workloads reliably and cost-effectively.

- Apache Spark is an open-source data processing engine built for speed and flexibility.

- It processes data in memory whenever possible, which improves performance.

- It supports SQL queries, machine learning, streaming, and advanced analytics.

- Spark is commonly used when businesses need faster insights from large datasets.

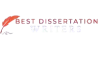

- In the Apache Spark vs Hadoop comparison, the main difference is their primary role.

- Hadoop is stronger for long-term storage and batch jobs.

- Spark is stronger for high-speed analytics and real-time-style workloads.

- Hadoop is often infrastructure-focused, while Spark is more processing-focused.

- Even though Apache Spark vs Hadoop is often discussed as a comparison, they are not always direct competitors.

- Many organizations use Hadoop for storage and Spark for analytics.

- This means they can work together in the same big data ecosystem.

Apache Spark vs Hadoop: Differences and Similarities

| Point | Apache Hadoop | Apache Spark |

|---|---|---|

| Definition | Distributed storage and batch processing framework | Fast data processing engine |

| Main strength | Scalable storage with HDFS | In-memory processing speed |

| Processing style | Disk-based, MapReduce-oriented | In-memory, fast execution |

| Best for | Batch jobs, archives, data lakes | Streaming, analytics, machine learning |

| Speed | Slower for repeated tasks | Faster for iterative workloads |

| Real-time support | Limited | Strong |

| Similarity | Open-source, distributed, scalable | Open-source, distributed, scalable |

Final takeaway: In Apache Spark vs Hadoop, Hadoop is the better fit for storage-heavy batch environments, while Spark is the better fit for modern analytics and faster processing.

Introduction to Big Data and Modern Data Frameworks

- Big data is no longer only about storing huge files. It is about how businesses process data, move data across systems, and turn raw inputs into useful insights for faster decisions.

- Today, companies deal with an enormous amount of data coming from websites, apps, sensors, transactions, logs, customer tools, and internal systems. This means traditional single-machine tools often struggle to manage volumes of data efficiently.

- Modern organizations need frameworks that support:

- reliable data storage

- scalable data processing

- quick data analysis

- flexible data pipelines

- support for both batch jobs and real-time data processing

- This is exactly where the comparison of Apache Spark vs Hadoop becomes important, as they are key examples of modern data analysis tools. When businesses start handling large data and large-scale data, they usually evaluate which framework can best support their workloads.

- The discussion around Apache Spark vs Hadoop matters because not every workload is the same:

- some teams need a cost-effective system for storing and processing historical files

- some need to stream data from live applications

- some need a powerful engine for machine learning, interactive queries, and fast analytics

- In many modern environments, the goal is not just to store distributed data, but also to move and analyze that data quickly enough to create business value.

- This is why frameworks like Hadoop and Spark have become central to the modern data platform. They help organizations manage data across clusters instead of relying on one server.

- When looking at Apache Spark vs Hadoop, it helps to remember one simple idea:

- Hadoop was designed first to solve storage and large-scale batch processing challenges

- Spark was designed later to improve speed, flexibility, and performance for more advanced workloads

- As a result, Apache Spark vs Hadoop is not only a technology comparison. It is also a comparison of how teams approach big data processing, analytics, and modern engineering needs.

What is Apache Hadoop? Understanding Hadoop Ecosystem and Hadoop Cluster

- Apache Hadoop is an open-source framework built to store and process data across many machines. It became popular because it made it possible to work with very large datasets without needing one expensive high-end server.

- At its core, Hadoop is designed for distributed computing. Instead of keeping everything on one machine, it splits files and workloads across a cluster, which helps organizations manage large data more affordably.

- The Hadoop ecosystem is made up of several important components:

- Hadoop Distributed File System for storage

- MapReduce for batch data processing

- YARN for resource management

- Hadoop Common for shared libraries and utilities

- The hadoop distributed file system is one of Hadoop’s most important features. It breaks files into blocks and stores them across multiple nodes, making the system more fault-tolerant and scalable for data storage.

- Because of the hadoop distributed file system, Hadoop can keep massive datasets available even if one machine fails. This makes it a strong choice for businesses managing high volumes of data over time.

- A Hadoop cluster typically includes:

- master nodes that manage coordination and scheduling

- worker nodes that store data and run processing tasks

- Hadoop is especially useful when businesses need to:

- archive and manage huge datasets

- run batch jobs on a schedule

- support a data warehouse or reporting environment with large historical records

- create dependable backend data pipelines

- One reason Hadoop became so influential in the Apache Spark vs Hadoop discussion is that it solved the foundational problem of distributed storage. Before faster engines became common, Hadoop gave companies a practical way to manage distributed data at scale.

- However, Hadoop’s traditional processing model writes more intermediate results to disk, which can slow performance for some modern use cases. That is why many people describe Spark as faster than Hadoop for certain workloads.

- Even so, Hadoop still plays an important role in enterprise systems, especially where durable data storage, batch reliability, and cost-effective scaling matter most.

- In short, Hadoop is often best understood as a framework and ecosystem built for storing and processing large-scale data reliably across clusters.

What is Apache Spark? How Spark Cluster Handles Modern Data Workloads

- Apache Spark is an open-source analytics engine designed for speed and flexibility. It was built to improve how systems process data, especially when teams need faster performance than traditional disk-heavy approaches.

- One of Spark’s biggest strengths is that it can work with data in memory. Instead of repeatedly writing every step to disk, Spark can keep more working data in RAM, which helps accelerate many workloads.

- This is one major reason Spark is often described as faster than Hadoop for advanced analytics, iterative machine learning, and interactive queries.

- A Spark cluster distributes tasks across many nodes, similar to Hadoop, but Spark focuses more on high-speed computation and modern workload support.

- Spark includes several important modules:

- Spark SQL for structured queries and working with tables

- Spark Streaming for handling live feeds and data streams

- MLlib for machine learning

- GraphX for graph processing

- spark sql is especially valuable for teams that want to analyze structured data using familiar SQL-like syntax. It helps connect Spark to reporting systems, BI tools, and even a data warehouse workflow.

- spark streaming supports use cases where organizations need to stream data continuously from applications, logs, devices, or event systems. This makes Spark highly relevant for real-time data processing.

- When teams compare Apache Spark vs Hadoop, Spark often stands out for:

- faster analytics

- support for streaming and iterative workloads

- easier development experience

- broader use in modern AI and analytics projects

- Spark is also effective when businesses need to join, transform, and analyze data across multiple sources in one environment. That makes it a highly flexible data platform for both engineers and analysts.

- Another advantage is how spark processes data for repeated operations. If the same dataset needs to be queried multiple times, Spark can avoid repeated disk reads and deliver results much more efficiently.

- Spark is not only about speed. It is also about versatility. Teams use it for:

- ETL and data pipelines

- dashboard support

- ad hoc data analysis

- machine learning workflows

- live event handling with data streams

- In many real business environments, Spark works best when companies want faster insight generation, especially from large data and mixed workloads.

Struggling with Your Data Analysis or Dissertation Statistics?

Get expert assistance with your data analysis projects or dissertation statistics from Best Dissertation Writers . Our team specializes in providing dissertation statistics help , ensuring accurate, well-analyzed results tailored to your research needs. Visit our site to get professional guidance and elevate the quality of your data-driven projects.

Get Professional HelpApache Spark vs Hadoop: Overview of Big Data Workloads

- When comparing Apache Spark vs Hadoop, the biggest difference is not simply which one is better. It is about which one fits the workload more naturally.

- Apache Spark vs Hadoop for batch processing:

- Hadoop is strong for traditional batch jobs over very large historical datasets

- Spark can also run batch jobs, but often completes them faster because it reduces disk-heavy operations

- Apache Spark vs Hadoop for streaming:

- Hadoop was not built primarily for live event handling

- Spark, through spark streaming, is much better suited for teams that need to stream data from apps, devices, or logs

- Apache Spark vs Hadoop for analytics:

- Hadoop supports large-scale processing, but interactive analytics can feel slower

- Spark, especially with spark sql, is more suitable for fast exploration and iterative data analysis

- Apache Spark vs Hadoop for storage:

- Hadoop remains highly valuable because of the hadoop distributed file system

- Spark is more of a compute engine, so it often works with external storage rather than replacing long-term data storage

- Apache Spark vs Hadoop for enterprise use:

- Hadoop can be ideal when the focus is affordable storage and steady batch processing

- Spark is often preferred when teams need agility, speed, and real-time data processing

- It is also important to note that spark and hadoop are not always competitors in practice. Many organizations use spark and hadoop together:

- Hadoop provides scalable storage through HDFS

- Spark runs advanced processing and analytics on top of that stored data

- In this sense, Apache Spark vs Hadoop is sometimes the wrong way to think about the decision. For some architectures, Spark complements Hadoop rather than replacing it.

- A practical way to understand Apache Spark vs Hadoop is this:

- choose Hadoop when durable storage and large batch jobs are the priority

- choose Spark when speed, flexibility, and modern big data processing matter more

- combine them when you need both scalable storage and high-performance computation

- Ultimately, the right choice depends on the workload, team skills, infrastructure, and how often the business needs to process data from growing volumes of data.

Key Differences Between Apache Spark vs Hadoop in Terms of:

Architecture and Performance

- When comparing Apache Spark vs Hadoop, the first major difference is how each platform is built at its core. Although both come from the Apache Software Foundation, they were designed with different priorities in mind.

- Hadoop is a framework built around distributed storage and batch computation. Its design focuses on storing huge files reliably and then breaking work into smaller data processing tasks that run across a Hadoop cluster.

- The main components of the Hadoop ecosystem include:

- Hadoop Distributed File System for storage

- MapReduce for processing

- YARN for cluster resource management

- Hadoop Core or Hadoop Common for shared services and libraries

- In Hadoop, data is split into a data block structure and distributed to different machines. To improve fault tolerance, Hadoop can replicate data across nodes via Hadoop Distributed File System, which helps prevent data loss when a machine fails.

- This storage-heavy design is one reason Hadoop uses a disk-based model so effectively for large amounts of data. It was built to handle petabytes of data by spreading files and workloads across many low-cost servers.

- Spark has a very different architecture. At the center is Spark Core, which acts as the main execution layer for scheduling, memory management, and distributed computation.

- In the Apache Spark vs Hadoop debate, Spark is often seen as the more modern data processing engine because Apache Spark processes data differently. Instead of repeatedly writing intermediate results as data to disk, Spark processes data in memory, which greatly reduces latency for many tasks.

- This is why Spark is generally faster than Hadoop for iterative analytics, repeated queries, and advanced computation. Where Hadoop often depends on disk I/O, Spark keeps more active data available in RAM.

- One of the clear limitations of Hadoop appears in workloads that require multiple repeated calculations. More specifically, the limitations of Hadoop MapReduce become obvious when jobs need fast feedback loops, because MapReduce writes too many temporary results to disk.

- In contrast, Spark provides a more flexible architecture for modern analytics. Spark provides support for batch, streaming, SQL, and machine learning in one environment, which helps improve development speed as well as performance.

- So, in terms of architecture and performance, Apache Spark vs Hadoop usually comes down to this: Hadoop is built for durable distributed storage and structured batch execution, while Spark is built for fast distributed computation and modern analytics.

Data Workloads Processing

- Another important part of Apache Spark vs Hadoop is how each platform handles different types of workloads.

- Hadoop was originally designed for large batch-oriented jobs. It works well when organizations need to process archived files, historical logs, clickstream records, or other massive data sets that do not require immediate results.

- In these cases, Hadoop can be used to break complex jobs into smaller tasks and run them across a distributed cluster. This makes it suitable for offline reporting, ETL jobs, and other forms of large-scale data processing.

- Hadoop is also useful when businesses need to manage large volumes of data that include structured records, text files, and some forms of unstructured data.

- Spark supports a broader range of workloads. In the Apache Spark vs Hadoop comparison, Spark stands out because Spark uses one engine for multiple purposes rather than relying mainly on MapReduce.

- For example, Spark supports:

- SQL-based analytics

- machine learning

- graph processing

- streaming workloads

- interactive queries

- This makes Spark much better for big data analytics environments where teams want to move from storage to insight more quickly.

- Spark is also highly effective for interactive data exploration, where analysts need to run repeated queries on the same data sets without waiting for long disk-heavy jobs to finish.

- Another difference is how each system handles changing workloads. Hadoop works best when the processing logic is predictable and scheduled. Spark is more flexible when workloads vary across analytics, dashboarding, event processing, and experimentation.

- So, when discussing the difference between Apache Hadoop and Spark in workload handling, the practical answer is simple:

- Hadoop is stronger for traditional batch-oriented processing of static files

- Spark is stronger for mixed and modern workloads that demand speed, flexibility, and repeated computation

Speed, Scalability, and Efficiency

- Speed is one of the biggest reasons the Apache Spark vs Hadoop discussion matters so much to modern data teams.

- Because Spark processes data in memory, it avoids much of the repeated disk access that slows traditional MapReduce jobs. That is why Spark is generally faster for many analytics-driven tasks.

- In fact, spark performs especially well when the same data must be queried multiple times, transformed in stages, or reused by analytics and machine learning workflows.

- This efficiency matters when organizations handle large volumes of data and need faster decision-making from those workloads.

- Hadoop, however, is still highly scalable. It was built from the ground up to handle petabytes of data and distribute them reliably across many machines. That core storage strength is part of what makes Hadoop valuable even today.

- In terms of raw storage scale, Hadoop remains extremely strong because it can store and organize large amounts of data efficiently through distributed file management.

- Spark scales well too, but its biggest advantage is performance efficiency for active computation rather than long-term storage alone.

- This is why, in Apache Spark vs Hadoop, many teams see Hadoop as the durable storage layer and Spark as the faster computation layer.

- Put another way:

- Hadoop excels when cost-effective storage and cluster resilience matter most

- Spark excels when processing speed and analytics responsiveness matter most

- That is also why Spark is often used in environments where fast reporting, model training, or exploratory workloads are essential.

Batch Processing vs Real-Time Data Workloads

- The final major difference in Apache Spark vs Hadoop is how each platform handles batch versus real-time work.

- Hadoop was designed primarily for batch processing. It performs well when data is collected, stored, and then processed later in large scheduled jobs.

- That means Hadoop is a good fit for nightly ETL jobs, archive analysis, and historical reporting pipelines that do not require immediate output.

- Spark can do batch work too, but it is much more flexible because Spark can run both batch and live workloads from the same platform.

- This is especially important with Spark Streaming and Structured Streaming, which allow teams to work with events, logs, and application activity as they arrive.

- In the Apache Spark vs Hadoop comparison, this makes Spark more suitable for teams that need dashboards, alerting systems, fraud checks, or live operational insights.

- Spark is ideal for real-time data workloads because it can ingest and process incoming information with far lower latency than traditional MapReduce pipelines.

- So, while Hadoop still remains useful for dependable batch execution, Spark is the stronger choice when speed and responsiveness are essential.

- In practical terms, Hadoop and Spark are two technologies that often complement each other rather than fully replace one another:

- Hadoop handles storage and batch-heavy infrastructure

- Spark handles fast computation and modern streaming analytics

- That is the real conclusion of Apache Spark vs Hadoop: Hadoop is excellent for stable large-scale storage and batch work, while Spark is better when businesses want faster analysis, lower latency, and modern processing flexibility.

When to Use Hadoop vs Spark for Big Data Workloads

- When deciding between Apache Spark vs Hadoop, the best choice depends on the kind of workload you need to run, how quickly you need results, and whether storage or processing is your bigger priority.

- Use Hadoop when your main need is reliable, cost-effective handling of very large historical datasets.

- Hadoop is especially useful when your team is working with huge archives, raw logs, and long-term enterprise records.

- It is a strong fit when jobs can run in batch mode and do not need immediate responses.

- It is also a practical choice when the system must store and manage large volumes of data across distributed infrastructure using HDFS and YARN.

- Use Spark when speed, flexibility, and modern analytics matter more.

- Spark is better suited for repeated queries, iterative machine learning, interactive dashboards, and streaming pipelines.

- It is often the better option when teams need to process the same data multiple times without constantly writing intermediate results back to disk.

- Spark also supports structured processing, streaming, machine learning, and graph workloads in one platform, which makes it more attractive for modern analytics teams.

- A simple way to think about Apache Spark vs Hadoop is this:

- choose Hadoop for storage-heavy, batch-oriented environments

- choose Spark for fast analytics, streaming, and iterative computation

- use both when you need durable distributed storage plus fast processing

- In practical terms, Apache Spark vs Hadoop is usually not a question of which tool is universally better. It is about which one matches your business goals, budget, latency expectations, and team workflow.

Examples of Use Cases for Apache Spark vs Hadoop

- Looking at real use cases makes Apache Spark vs Hadoop much easier to understand.

- Good Hadoop use cases include:

- storing very large data lakes for long-term retention

- running overnight ETL jobs on historical records

- processing large log collections for batch reporting

- supporting compliance, archival, and back-office data platforms

- Hadoop is a strong choice in these cases because the data is often huge, the jobs are predictable, and the business does not need instant output.

- Good Spark use cases include:

- real-time fraud detection

- recommendation engines

- clickstream analysis

- live dashboards

- streaming application logs

- machine learning workflows

- Spark is especially useful in these scenarios because it supports fast SQL processing, repeated transformations, and streaming computation on the same engine. Spark SQL handles structured queries, while Structured Streaming supports incremental and continuous stream processing.

- In many organizations, the best answer to Apache Spark vs Hadoop is not “only one.”

- Hadoop stores the raw and historical data in HDFS.

- Spark reads that data, transforms it, and powers analytics or streaming layers on top.

- That is why many real-world architectures treat Apache Spark vs Hadoop as a design decision about roles rather than a strict winner-versus-loser debate.

Struggling with Your Data Analysis or Dissertation Statistics?

Get expert assistance with your data analysis projects or dissertation statistics from Best Dissertation Writers . Our team specializes in providing dissertation statistics help , ensuring accurate, well-analyzed results tailored to your research needs. Visit our site to get professional guidance and elevate the quality of your data-driven projects.

Get Professional HelpUnderstanding Hadoop MapReduce vs Apache Spark Processing Engine

- One of the clearest parts of Apache Spark vs Hadoop is the difference between Hadoop MapReduce and the Spark processing engine.

- Hadoop MapReduce is built for parallel batch execution.

- It splits a job into map tasks and reduce tasks.

- It distributes those tasks across cluster nodes.

- It is reliable and fault tolerant, which is why it became foundational in early big data systems. Hadoop’s MapReduce documentation describes it as a framework for processing very large datasets in parallel on large clusters.

- The downside is that MapReduce is less efficient for workloads that require many repeated processing steps.

- Intermediate results are often written to disk between stages.

- That adds latency and makes interactive or iterative workloads slower.

- Spark takes a different approach.

- Spark is a unified engine for large-scale data analytics.

- It includes modules for SQL, machine learning, graph processing, and streaming.

- It can keep more working data in memory, which is why it is often much faster for repeated analysis tasks.

- This is the core processing difference in Apache Spark vs Hadoop:

- MapReduce is dependable for batch jobs and long-running pipelines

- Spark is more flexible for analytics, real-time workloads, and developer productivity

- So, if your workload involves repeated transformations, live data, or exploratory analytics, Spark usually feels more natural. If your workload is stable, predictable, and storage-centered, MapReduce can still do the job well.

Hadoop vs Spark: Which One is Better for Modern Data Needs?

- So in Apache Spark vs Hadoop, which one is better for modern data needs?

- For most modern analytics workloads, Spark usually has the advantage.

- Businesses increasingly want faster reporting, low-latency pipelines, streaming support, and data science workflows in one environment.

- Spark was designed with this broader scope in mind, which is why it is widely used for modern analytics and engineering tasks.

- Hadoop is still highly valuable, but its strongest role today is often infrastructure-oriented.

- HDFS remains useful for scalable distributed storage.

- YARN remains important for cluster resource management and job scheduling.

- That means the answer to Apache Spark vs Hadoop depends on what “better” means in your environment:

- better for low-cost storage and batch jobs: Hadoop

- better for fast analytics and mixed workloads: Spark

- better for a full big data stack: often both together

- For many modern teams, Spark becomes the main computation layer, while Hadoop remains the storage and resource backbone underneath.

Apache Hadoop and Apache Spark Together: Can They Work in One Hadoop Cluster?

- Another important point in Apache Spark vs Hadoop is that the two technologies can absolutely work together.

- Spark’s official documentation states that Spark uses Hadoop client libraries for HDFS and YARN, and Spark provides official guidance for running Spark on YARN.

- In practical terms, that means:

- Hadoop can provide distributed storage through HDFS

- YARN can manage resources across the cluster

- Spark can run applications on top of that same environment

- This combined setup is powerful because it lets organizations keep Hadoop’s storage strengths while adding Spark’s faster analytics and streaming capabilities.

- So, one of the most useful conclusions from Apache Spark vs Hadoop is that they are not always direct substitutes. In many production environments, Spark improves the value of an existing Hadoop cluster rather than replacing it.

Conclusion: Final Thoughts on Hadoop vs Spark and Key Differences

- Ultimately, Apache Spark vs Hadoop is not about choosing a single winner for every situation.

- Hadoop remains strong for distributed storage, resource management, and dependable batch processing at scale.

- Spark stands out for speed, flexibility, streaming, SQL analytics, and iterative processing.

- The most practical answer in Apache Spark vs Hadoop is:

- use Hadoop when storage scale and batch reliability matter most

- use Spark when fast computation and modern analytics matter most

- combine them when you need both

- For readers comparing tools for current business systems, that is the real takeaway: Hadoop built the foundation of big data infrastructure, while Spark pushed that foundation toward faster, more modern data work.

References

- Data Analysis Guide – Georgetown University Library – https://guides.library.georgetown.edu/data-analysis

- 5 Key Reasons Why Data Analytics Is Important for Business – University of Pennsylvania – https://lpsonline.sas.upenn.edu/features/5-key-reasons-why-data-analytics-important-business

- 10 Easy Data Analysis Methods and Techniques – Isenberg School of Management – https://iconnect.isenberg.umass.edu/blog/2024/06/06/10-easy-data-analysis-methods-and-techniques/

- Using Data for Analysis – Georgia Tech Library – https://libguides.library.gatech.edu/usingdata/analyzing-data